One day I was listening to an interview with a person who runs software development courses. He was answering questions about software development in different fields and about what being a full-stack developer means. Since our Fitradar system covers several development fields, I decided to share my experience of working in them and how easy it is to jump from one domain to another.

I remember that back in the day, when I was still a university student, several classes focused on business modeling and on implementing a system according to the model. As I later found out, this was a common approach to enterprise application design and development. But my first software-related job was at a company that produced routers and their software. At that time I was writing small scripts to test different router configurations. I was not directly involved in developing the router’s software, but I wanted to become part of the development team, so I started to explore the router’s operating system, which, like many other embedded systems, was based on the Linux kernel. I soon discovered that many principles I had learned in programming classes were not applied in embedded systems; instead, a big emphasis was put on performance. For example, error codes were used instead of exceptions to improve performance. In mobile and enterprise applications that would be considered bad practice. Another odd thing for me was that there were no unit tests, only integration or end-to-end tests, which in the enterprise application world would again be considered very bad. At that moment it hit me that the way a system is developed depends on the domain and not that much on the language. So for myself I distinguished the following software development fields:

- web front-end development

- mobile application development

- desktop application development

- game development

- embedded systems development

- enterprise application development

This is by no means a full list of the domains where software is developed. These are just the areas I have come across in my career as a developer. Many principles cover all of these fields, and they are what every programming class starts with: variables, loops, conditions, functions. But once we need to organize a bigger code base, the principles start to vary.

In our Fitradar project we are developing two big applications: a mobile application for Android and iOS and a back-end system that is gradually evolving into a full-scale enterprise application. The approaches in some parts of both systems are similar, but other parts have quite different goals. So in this article I want to sum up the differences and similarities between mobile app and back-end development in the Fitradar solution.

As mentioned earlier, those differences really start to show when the code base becomes so big that you need extra time to navigate it. If you don’t follow any code organization principles, the time you need to navigate, understand and modify the code will grow proportionally, and sometimes even exponentially, with the size of the code base. So for big systems we really need to organize our code, and almost all my previous articles about development were dedicated to different approaches to doing that. The one thing I discover again and again is this: although it is important to know principles like object-oriented programming, SOLID and design patterns, it is just as important to apply those principles only where they are needed.

I remember one web project where the front end was developed in Angular and the back end in ASP.NET MVC. Our team took over the project from another company and had to keep extending it with new features. The back end was easy to understand and modify because it followed well-established enterprise application best practices. But we really struggled with the front end, because its code was organized according to the same principles as the back end, and it looked like the developers had ignored many of Angular’s built-in features and principles. Only later did we find out that developers who mainly worked on the back end had designed the front-end application as well. This approach would have worked fine if the front end had been a very simple application, but it was so big that organizing its code required knowledge of principles specific to user interfaces. In my experience, it takes a developer new to a domain a year or more to start producing a decent design, not counting the time needed to master the programming language itself. That is why big web application projects usually have separate front-end and back-end developers. For smaller web applications, general programming knowledge might be enough. So let’s see where the focus lies in back-end and mobile applications:

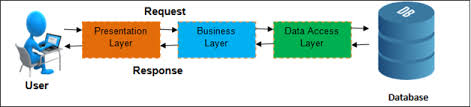

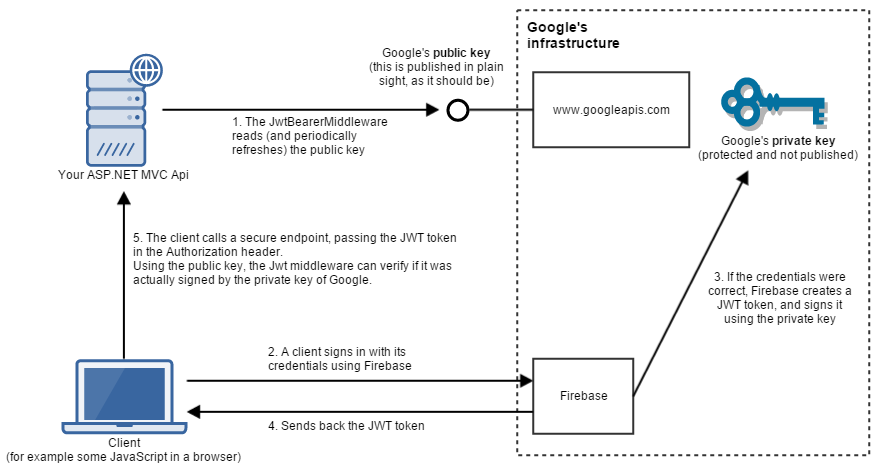

- As you can imagine, even the simplest mobile app has a UI (there are some special background apps, but we will not consider them here), so the emphasis in a mobile application is first of all on organizing the UI code and connecting the UI with the rest of the application. The UI is the part of the system that takes input from the user and displays information to the user. The back end, on the other hand, interacts with the outside world via REST (or maybe GraphQL) web services, where data is received and sent in a well-defined format, and the formatting is usually done by a third-party back-end library. Since a UI can be very complex, we need to consider principles, practices and patterns that are specific to UI development, and that is where pure back-end developers lack knowledge. If no data is used, the mobile app might be limited to just the UI, which means that a back-end developer can transfer very little knowledge from back-end development. But if the mobile application works with data stored either locally on the device or on a back-end server, an extra data persistence layer might be required.

- Data layer development in a mobile app and on the back end might seem very similar. Indeed, if we choose to store data on a mobile device, we can choose a database for this purpose and use well-known patterns like Repository and Data Access Object, just as we do on the back-end server. This might give the impression that a mobile app developer can develop this part of the system for both the mobile and the back-end application. But my experience shows that this is true only to some extent. In a mobile app the data persistence layer should always be simple, because hardware resources are very limited and it is unwise to build a large-scale database on a mobile device; instead, data is transferred to the back-end server and stored there. The database on a mobile device is often used as a cache, where data is denormalized and structured purely for the UI’s needs. Back-end database design, on the other hand, is a big topic where data normalization and performance are considered. This time it is pure front-end developers who might lack knowledge of complex persistence layer design.
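
To make the idea of a simple, UI-shaped cache more concrete, here is a minimal TypeScript sketch of the Repository pattern over a local store. It is only an illustration, not Fitradar code: the `WorkoutCard` shape, the repository names and the sample data are all hypothetical, and an in-memory `Map` stands in for the on-device database. It also assumes that all timestamps are ISO 8601 strings in UTC, so they can be compared as plain strings.

```typescript
// Hypothetical denormalized row: one record per list item, ready for the UI.
interface WorkoutCard {
  id: number;
  title: string;
  trainerName: string; // copied into the row instead of joined from another table
  startsAt: string;    // ISO 8601 timestamp in UTC (assumption)
}

// Repository: the UI depends on this interface, not on the storage behind it.
interface WorkoutCardRepository {
  saveAll(cards: WorkoutCard[]): void;
  findUpcoming(nowIso: string): WorkoutCard[];
}

// In-memory stand-in for the on-device database that is used as a cache.
class InMemoryWorkoutCardRepository implements WorkoutCardRepository {
  private readonly rows = new Map<number, WorkoutCard>();

  // Insert or overwrite rows, e.g. after a response from the back-end server.
  saveAll(cards: WorkoutCard[]): void {
    for (const card of cards) {
      this.rows.set(card.id, { ...card });
    }
  }

  // Return cards that start at or after `nowIso`, earliest first.
  findUpcoming(nowIso: string): WorkoutCard[] {
    return Array.from(this.rows.values())
      .filter((card) => card.startsAt >= nowIso)
      .sort((a, b) => a.startsAt.localeCompare(b.startsAt));
  }
}

// Usage with made-up data.
const localCache: WorkoutCardRepository = new InMemoryWorkoutCardRepository();
localCache.saveAll([
  { id: 1, title: "Yoga", trainerName: "Anna", startsAt: "2024-01-03T10:00:00Z" },
  { id: 2, title: "Boxing", trainerName: "Tom", startsAt: "2024-01-01T10:00:00Z" },
  { id: 3, title: "Running", trainerName: "Eva", startsAt: "2024-01-02T09:00:00Z" },
]);
const upcoming = localCache.findUpcoming("2024-01-02T00:00:00Z");
```

The trainer name is duplicated in every row on purpose: the cache exists to serve one screen quickly, while the normalized source of truth stays on the back-end server.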

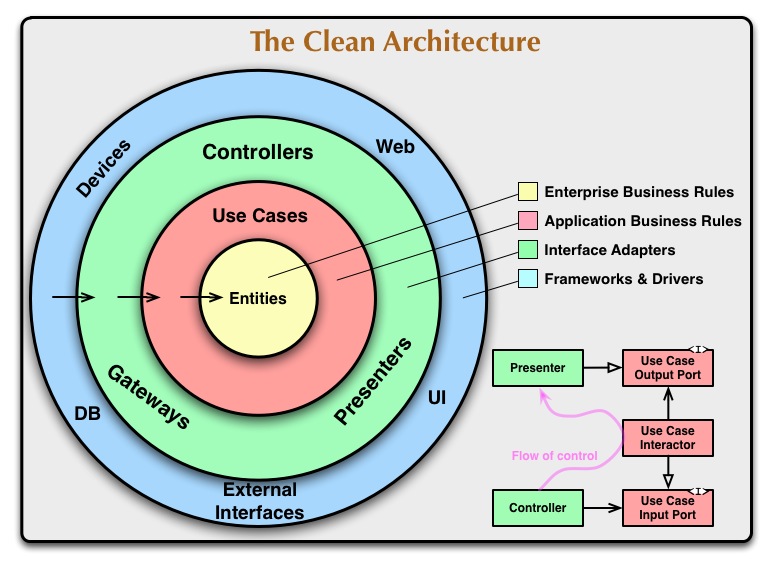

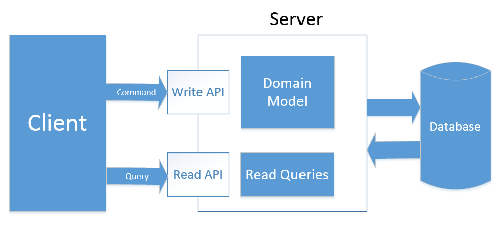

- When the system’s complexity grows, more layers start to emerge on the back end, such as a Domain layer and an Event Bus. The inner details of domain logic are usually something people don’t want to expose to the outside world, so it is implemented only on the back-end server. Implementing domain logic might require a lot of specific design patterns and practices, which again only back-end developers might be aware of.
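
As a rough illustration of what a Domain layer and an Event Bus can look like, here is a small TypeScript sketch. Everything in it is hypothetical: the `TrainingSession` entity, the capacity rule and the `SeatBooked` event are invented for the example and are not taken from the Fitradar code base. The event bus is a simple in-process one, whereas a real back end might use a message broker instead.

```typescript
// Every domain event carries a name that subscribers can listen for.
interface DomainEvent {
  readonly name: string;
}

// Hypothetical event raised when a user successfully books a seat.
class SeatBooked implements DomainEvent {
  readonly name = "SeatBooked";
  constructor(readonly sessionId: number, readonly userId: number) {}
}

type Handler = (event: DomainEvent) => void;

// Minimal in-process event bus: handlers are called synchronously, in order.
class EventBus {
  private readonly handlers = new Map<string, Handler[]>();

  subscribe(name: string, handler: Handler): void {
    const list = this.handlers.get(name) || [];
    list.push(handler);
    this.handlers.set(name, list);
  }

  publish(event: DomainEvent): void {
    for (const handler of this.handlers.get(event.name) || []) {
      handler(event);
    }
  }
}

// Domain entity: the business rule (capacity, no double booking) lives here,
// not in the web service layer and not in the mobile app.
class TrainingSession {
  private readonly attendees = new Set<number>();

  constructor(
    readonly id: number,
    private readonly capacity: number,
    private readonly bus: EventBus,
  ) {}

  // Returns true and publishes SeatBooked if the booking is accepted.
  book(userId: number): boolean {
    if (this.attendees.has(userId) || this.attendees.size >= this.capacity) {
      return false;
    }
    this.attendees.add(userId);
    this.bus.publish(new SeatBooked(this.id, userId));
    return true;
  }
}

// Usage: a session with a single seat and one subscriber that records events.
const bus = new EventBus();
const published: string[] = [];
bus.subscribe("SeatBooked", (event) => {
  published.push(event.name);
});
const session = new TrainingSession(1, 1, bus);
const firstBooking = session.book(10);
const secondBooking = session.book(11);
```

Passing the bus into the entity keeps the sketch short. Another common option is to let the entity collect its events and have an application service publish them after the change is saved.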

So at the end of the day we can still apply general software development knowledge across domains as long as the code base stays small and simple. That is why even a high school student can produce decent software in any field, as long as that piece of software is small. But once the system grows big, domain expertise becomes crucial, and that expert knowledge comes only over the years as a result of learning and practice.

Please visit our website: http://fitradar.me/ and join the mailing list! Our app is coming soon.